AWS and OpenAI Just Made Stateful AI Agents Production-Ready on Amazon Bedrock

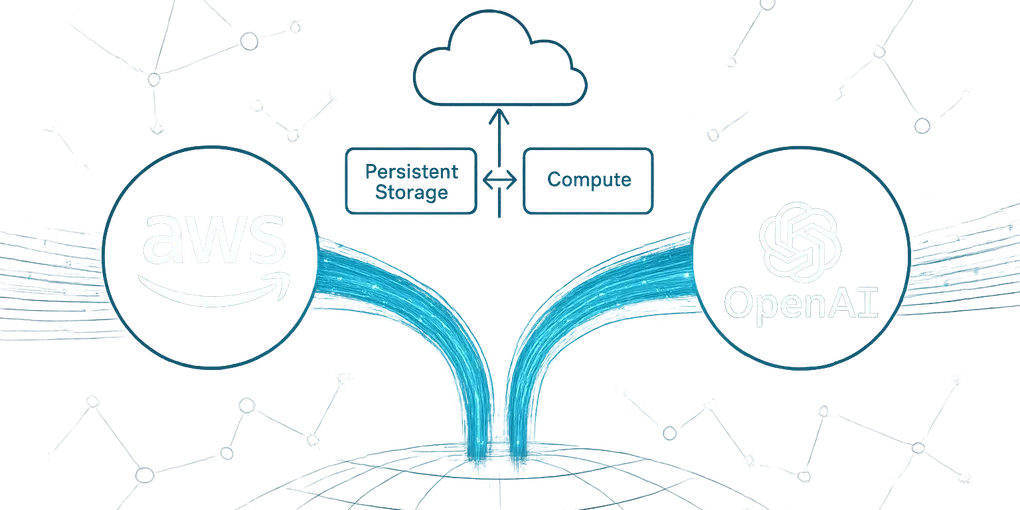

If you’ve been building AI applications on AWS and hitting the wall of stateless, one-shot API calls, that wall just came down. Amazon Web Services and OpenAI announced a co-created Stateful Runtime Environment powered by OpenAI models, available directly on Amazon Bedrock. It’s a meaningful architectural shift — it changes what’s possible with production AI agents as of April 2026.

Key Takeaways

- AWS and OpenAI co-created a Stateful Runtime now available on Amazon Bedrock (April 2026).

- Stateful agents maintain memory across sessions without developer-managed context plumbing.

- OpenAI models are now a first-class option alongside Claude and Mistral on Bedrock.

- Enterprise teams get single billing, IAM/VPC compliance, and multi-model orchestration in one platform.

- Long-running document agents, customer-memory bots, and multi-agent pipelines are now standard Bedrock workflows.

What Does “Stateful” Actually Mean for Builders?

Most AI API calls today are stateless: you send a prompt, get a response, done. The model doesn’t remember anything between calls unless you manually pass conversation history back and forth. That works fine for simple Q&A but falls apart the moment you need an agent to:

- Track progress across a multi-step workflow

- Remember what it did earlier in the same session

- Pick up where it left off after an interruption

- Coordinate with other agents over time

The new Stateful Runtime on Bedrock handles all of this natively. The runtime manages session state, context persistence, and agent continuity so your application code doesn’t have to. You build the logic; the infrastructure holds the memory. For a broader primer on how agentic AI systems work under the hood, see our AI agents explainer.

Why Is This AWS–OpenAI Partnership Significant?

Amazon Bedrock and OpenAI have historically been separate lanes — Bedrock offered its own model catalog (Claude, Titan, Mistral) while OpenAI ran through its own API. This co-creation breaks that pattern. OpenAI models are now a first-class option on Bedrock with deep infrastructure integration, not just a wrapper.

For enterprise teams, this matters for a few reasons:

Single billing and compliance surface. Running OpenAI models through Bedrock means you can use your existing AWS contracts, IAM policies, VPC configurations, and compliance frameworks. You’re not opening a separate OpenAI billing relationship or renegotiating data processing agreements.

Lower latency through co-location. When your app infrastructure and AI runtime live in the same AWS region, you cut round-trip overhead. For agentic workflows that make dozens of sequential API calls, that adds up fast.

Easier multi-model orchestration. Bedrock already lets you mix and match models in a workflow. Stateful OpenAI agents can now sit alongside Claude or Mistral agents in the same pipeline without stitching together different SDKs.

What Gets Easier to Build?

Here’s what this actually unlocks for teams building production AI systems:

Long-running research and document agents. An agent that processes a 50-page procurement document, asks clarifying questions, and returns a structured summary — across multiple interactions, over hours — becomes straightforward to build.

Customer-facing service agents with memory. A customer service agent that remembers a customer’s prior issues across sessions, without your team manually engineering that context management, is now a standard Bedrock workflow.

Automated operational pipelines. Think agents that monitor data, flag anomalies, escalate to human review when needed, and resume processing after human sign-off — all within a persistent session.

Coordinated multi-agent tasks. Orchestrating a team of specialized agents (one for research, one for drafting, one for QA) with shared state is the kind of workflow that used to require custom middleware. The Stateful Runtime is designed to handle that coordination layer. For more on what multi-agent coordination looks like in practice, see our coverage of Claude Code’s multi-agent redesign.

The Real Threshold Being Crossed

The shift from stateless to stateful isn’t just a technical upgrade — it’s the difference between AI as a tool you occasionally consult and AI as infrastructure that actively runs your workflows. Most serious agentic deployments have been blocked by the complexity of managing state at scale. Frameworks like LangChain and LlamaIndex have done a lot to paper over that complexity, but they’re still application-layer solutions.

Building it into the cloud runtime — managed, scaled, and backed by AWS SLAs — removes an entire category of engineering problem. For businesses that have been evaluating whether to build agentic AI internally or wait for the infrastructure to mature: this is a signal that the infrastructure is maturing.

Practical Next Steps

If you’re running workloads on AWS already:

- Check your Bedrock region availability — stateful runtime features typically roll out by region over weeks.

- Audit your current stateless agent flows — identify which ones have brittle context management you’ve built manually.

- Review your IAM policies — stateful sessions create new permission surface area (who can read/write session state?).

- Prototype one workflow — pick the highest-friction agentic use case in your current stack and test it against the new runtime before committing to a migration.

The announcement signals where cloud-native AI infrastructure is heading: less plumbing, more capability out of the box. For teams on AWS, getting comfortable with Bedrock’s agent features now is table stakes for what comes next.

Frequently Asked Questions

What does “stateful” mean for AI agents on Amazon Bedrock?

Stateful means an AI agent can remember what it did earlier in a session, track progress through multi-step tasks, and resume work after an interruption — without you manually feeding it context each time. Previously, every API call was stateless, meaning the agent had no memory of prior steps unless developers built that infrastructure themselves.

What’s actually new about the AWS and OpenAI Bedrock announcement?

AWS and OpenAI co-created a Stateful Runtime Environment built directly into Amazon Bedrock. It handles session memory and state management at the infrastructure level, so developers no longer need to engineer those systems themselves. It also consolidates billing and compliance into a single AWS surface and supports multi-model orchestration with models like Claude and Mistral alongside OpenAI’s.

What kinds of AI applications does this make possible?

It enables long-running document processing agents, customer service bots that remember conversation history across sessions, automated operational pipelines that survive interruptions, and coordinated multi-agent workflows where several AI models hand off tasks to each other. These were all difficult to scale reliably before because state had to be managed manually.

Why was state management such a big problem for AI agents before this?

Traditional AI API calls are stateless — each request is isolated, so the model has no memory of previous steps unless the developer explicitly stores and re-injects that context. At scale, building and maintaining that infrastructure is complex and error-prone. The new Bedrock runtime moves that responsibility to AWS, removing it as an engineering bottleneck.